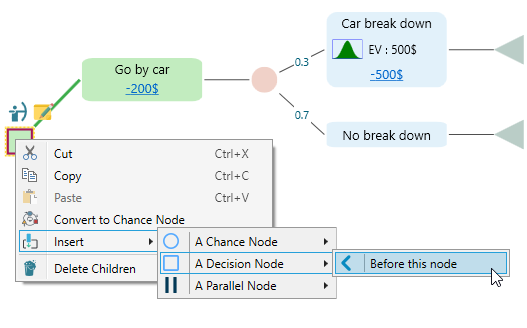

```matlab
% Assumes N, heart_scale_label, heart_scale_inst, predict_label, prob_values,
% testIndex and trainIndex already exist from the earlier training/prediction steps.

% Assign a color to each class
% colorList = generateColorList(2); % This is my own way to assign the color. Don't worry about it.
colorList = prism(100);

% True (ground truth) class
trueClassIndex = zeros(N,1);
trueClassIndex(heart_scale_label==1) = 1;
trueClassIndex(heart_scale_label==-1) = 2;
colorTrueClass = colorList(trueClassIndex,:);

% Result class
resultClassIndex = zeros(length(predict_label),1);
resultClassIndex(predict_label==1) = 1;
resultClassIndex(predict_label==-1) = 2;
colorResultClass = colorList(resultClassIndex,:);

% Reduce the dimension from 13D to 2D
distanceMatrix = pdist(heart_scale_inst,'euclidean');
newCoor = mdscale(distanceMatrix,2);

% Plot the whole data set
x = newCoor(:,1);
y = newCoor(:,2);
patchSize = 30; %max(prob_values,[],2);
colorTrueClassPlot = colorTrueClass;
figure; scatter(x,y,patchSize,colorTrueClassPlot,'filled');
title('whole data set');

% Plot the test data: large patch = true class, small patch = predicted class
x = newCoor(testIndex==1,1);
y = newCoor(testIndex==1,2);
patchSize = 80*max(prob_values,[],2);
colorTrueClassPlot = colorTrueClass(testIndex==1,:);
figure; hold on;
scatter(x,y,2*patchSize,colorTrueClassPlot,'o','filled');
scatter(x,y,patchSize,colorResultClass,'o','filled');

% Plot the training set
x = newCoor(trainIndex==1,1);
y = newCoor(trainIndex==1,2);
patchSize = 30;
colorTrueClassPlot = colorTrueClass(trainIndex==1,:);
scatter(x,y,patchSize,colorTrueClassPlot,'o');
title('classification results');
```

In this example, we will use the option that enforces n-fold cross validation in svmtrain: simply put '-v n' in the parameter string, where n denotes the number of folds.
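As a rough illustration of the '-v n' option mentioned above, here is a minimal sketch. It assumes the LIBSVM MATLAB interface (`svmtrain`, `libsvmread`) is on the path and that the `heart_scale` file shipped with LIBSVM is in the working directory. The values of `-c` and `-g` and the name `nFold` are arbitrary choices for illustration, not settings taken from the original code.

```matlab
% Sketch only: requires the LIBSVM MATLAB interface and the heart_scale data file.
[heart_scale_label, heart_scale_inst] = libsvmread('heart_scale');

nFold = 5;                                        % illustrative choice of n
cmd = sprintf('-c 1 -g 0.07 -v %d', nFold);       % '-v n' switches svmtrain to cross-validation mode

% With '-v', svmtrain returns the cross-validation accuracy (a scalar, in percent)
% instead of a model struct.
cvAccuracy = svmtrain(heart_scale_label, heart_scale_inst, cmd);
fprintf('%d-fold cross-validation accuracy: %.2f%%\n', nFold, cvAccuracy);
```

Because no model is returned in this mode, the usual pattern is to use the accuracy to pick parameters and then call `svmtrain` again without '-v' to train the final model.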

Parameter selection using n-fold cross validation, both semi-manual and the automatic approach

Big picture: In this scenario, I compiled an easy example to illustrate how to use svm in the full process. Each piece of code is built for a specific application, so readers may find it useful to download and tweak it to save development time.

The observations are separated equally into n folds. The code uses n-1 folds to train the svm model, which is then used to classify the remaining fold according to standard OVR.

Using multiclass ovr-svm with kernel: So far I haven't shown the usage of ovr-svm with a specific kernel ('-t x'). You can just add '-t x' to the code. The complete code can be found here.

For parameter selection using cross validation, we use the code below to calculate the average accuracy cv:

`cv = get_cv_ac(trainLabel, , cmd, Ncv)`

Training: just add '-t x' to the training code:

`model = ovrtrainBot(trainLabel, , bestParam)`
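The fold scheme described above (train on n-1 folds, classify the remaining one) can be sketched in plain MATLAB without any SVM code. This is only an illustration of the splitting idea: the names `N`, `nFold`, `foldIndex`, `trainMask` and `testMask` are hypothetical, and this is not the implementation inside `get_cv_ac`.

```matlab
% Sketch only: all variable names here are illustrative.
N = 10;                                   % number of observations
nFold = 5;                                % number of folds
rng(0);                                   % fix the seed so the split is reproducible
shuffled = randperm(N);                   % random order of the observations

% Deal the shuffled observations into folds 1..nFold in round-robin fashion
foldIndex = zeros(1,N);
foldIndex(shuffled) = mod(0:N-1, nFold) + 1;

for k = 1:nFold
    testMask  = (foldIndex == k);         % the single held-out fold
    trainMask = ~testMask;                % the remaining n-1 folds
    fprintf('fold %d: %d train, %d test\n', k, sum(trainMask), sum(testMask));
    % Train on the rows selected by trainMask, then classify the rows in testMask.
end
```

Averaging the per-fold accuracy over the loop gives the cross-validation accuracy used for parameter selection.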

Classification uses the trained model:

`= ovrpredictBot(testLabel, , model)`

However, I found that the code can be very slow in the parameter selection routine when the number of classes and the number of cross-validation folds are big (e.g., Nclass = 10, Ncv = 3). I think the slow part might be caused by the kernel matrix, which can be huge. Personally I like to use the default kernel (RBF), for which we don't need to build the kernel matrix X*X', and that might contribute to a pretty quick speed.

How to obtain the SVM weight vector w: Please see the example code and discussion from StackOverflow. More details can be found here.

Complete example for classification using train and test data set separately: This code works on data sets where the train and test sets are separated; that is, it trains the model using the train set and uses the model to classify the test set. More details can be found here.


Author: Jose
